Table of Contents

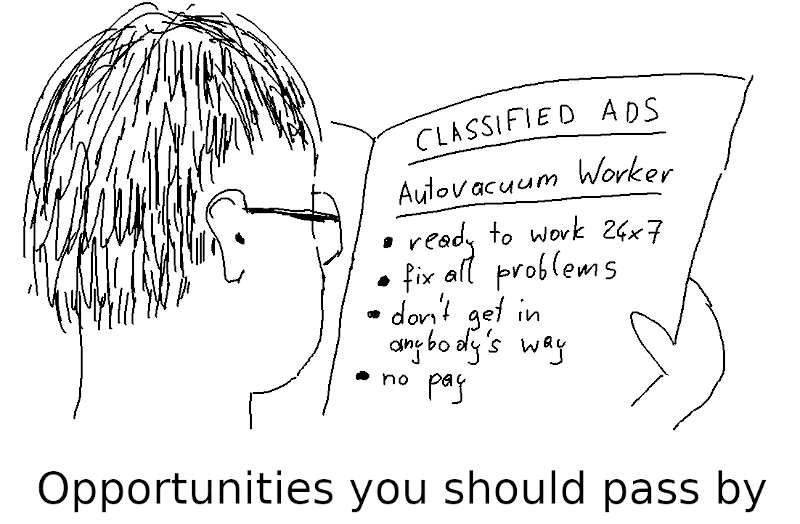

In many PostgreSQL databases, you never have to think or worry about tuning autovacuum. It runs automatically in the background and cleans up without getting in your way.

But sometimes the default configuration is not good enough, and you have to tune autovacuum to make it work properly. This article presents some typical problem scenarios and describes what to do in these cases.

There are many autovacuum configuration parameters, which makes tuning complicated. The main reason is that autovacuum has many different tasks. In a way, autovacuum has to fix all the problems arising from PostgreSQL's Multiversioning Concurrency Control (MVCC) implementation:

UPDATE or DELETE operationsANALYZE runs to keep the table statistics updatedDepending on which of these functionalities cause a problem, you need different approaches to tuning autovacuum.

The best-known autovacuum task is cleaning up of dead tuples from UPDATE or DELETE operations. If autovacuum cannot keep up with cleaning up dead tuples, you should follow these three tuning steps:

Check the known reasons that keep vacuum from removing dead tuples. Most often, the culprit are long running transactions. Unless you can remove these obstacles, tuning autovacuum will be useless.

If you cannot fight the problem at its root, you can use the configuration parameter idle_in_transaction_session_timeout to have PostgreSQL terminate sessions that stay “idle in transaction” for too long. That causes errors on the client side, but may be justified if you have no other way to keep your database operational. Similarly, to fight long-running queries, you can use statement_timeout.

If autovacuum cannot keep up with cleaning up dead tuples, the solution is to make it work faster. This may seem obvious, but many people fall into the trap of thinking that making autovacuum start earlier or run more often will solve the problem.

VACUUM is a resource-intensive operation, so autovacuum by default operates deliberately slowly. The goal is to have it work in the background without being in the way of normal database operation. But if your workload creates lots of dead tuples, you will have to make it more aggressive:

autovacuum_vacuum_cost_limit from its default value of 200 (this is the gentle method)autovacuum_vacuum_cost_delay from its default value of 2 (in older versions: 20!) milliseconds (this is the effective method)Setting autovacuum_vacuum_cost_delay to 0 will make autovacuum as fast as a manual VACUUM – that is, as fast as possible.

Since not all tables grow dead tuples at the same pace, it is usually best not to change the global setting in postgresql.conf, but to change the setting individually for busy tables:

|

1 |

ALTER TABLE busy_table SET (autovacuum_vacuum_cost_delay = 1); |

Partitioning a table can also help with getting the job done faster; see below for more.

If nothing else works, you have to see that fewer dead tuples are generated. Perhaps several UPDATEs to a single row could be combined to a single UPDATE?

Often you can significantly reduce the number of dead tuples by using “HOT updates”:

fillfactor for the table to a value less than 100, so that INSERTs leave some free space in each blockUPDATE is indexedThen any SELECT or DML statement can clean up dead tuples, and there is less need for VACUUM.

The expensive part of an index scan is looking up the actual table rows. If all columns you want are in the index, it should not be necessary to visit the table at all. But in PostgreSQL you also have to check if a tuple is visible or not, and that information is only stored in the table.

To work around that, PostgreSQL has a “visibility map” for each table. If a table block is marked as “all visible” in the visibility map, you don't have to visit the table for the visibility information.

So to get true index-only scans, autovacuum has to process the table and update the visibility map frequently. How you configure autovacuum for that depends on the kind of data modifications the query receives:

UPDATEs or DELETEsFor that, you reduce autovacuum_vacuum_scale_factor for the table, for example

|

1 |

ALTER TABLE mytable SET (autovacuum_vacuum_scale_factor = 0.01); |

It may be a good idea to also speed up autovacuum as described above.

INSERTsThis is simple from v13 on: tune autovacuum_vacuum_insert_scale_factor as shown above for autovacuum_vacuum_scale_factor.

For older PostgreSQL versions, the best you can do is to significantly lower autovacuum_freeze_max_age. The best value depends on the rate at which you consume transaction IDs. If you consume 100000 transaction IDs per day, and you want the table to be autovacuumed daily, you can set

|

1 |

ALTER TABLE insert_only SET (autovacuum_freeze_max_age = 100000); |

To measure the rate of transaction ID consumption, use the function txid_current() (or pg_current_xact_id() from v13 on) twice with a longer time interval in between and take the difference.

Normally, autovacuum takes care of that and starts a special “anti-warparound” autovacuum worker whenever the oldest transaction ID in a table is older than autovacuum_freeze_max_age transactions or the oldest multixact is older than autovacuum_multixact_freeze_max_age transactions.

Again, you have to make sure that there is nothing that blocks autovacuum from freezing old tuples and advancing pg_database.datfrozenxid and pg_database.datminmxid. Such blockers can be:

To prevent data corruption, use good hardware and always run the latest PostgreSQL minor release.

UPDATEs or DELETEs for anti-wraparound vacuumOn tables that receive UPDATEs or DELETEs, all that you have to do is to see that autovacuum is running fast enough to get done in time (see above).

INSERTs for anti-wraparound vacuumFrom PostgreSQL v13 on, there are no special considerations in this case, because you get regular autovacuum runs on such tables as well.

Before that, insert-only tables were problematic: since there are no dead tuples, normal autovacuum runs are never triggered. Then, as soon as autovacuum_freeze_max_age or autovacuum_multixact_freeze_max_age are exceeded, you may suddenly get a massive autovacuum run that freezes a whole large table, takes a long time and causes massive I/O.

To avoid that, reduce autovacuum_freeze_max_age for such a table:

|

1 |

ALTER TABLE insert_only SET (autovacuum_freeze_max_age = 10000000); |

With very big tables, it can be advisable to use partitioning. The advantage here is you can have several autovacuum workers working on several partitions in parallel, so that the partitioned table as a whole is done faster than a single autovacuum worker could.

If you have many partitions, you should increase autovacuum_max_workers, the maximum number of autovacuum workers.

Partitioning can also help with vacuuming tables that receive lots of updates, as long as the updates affect all partitions.

Updating table statistics is a “side job” of autovacuum.

You know that automatic statistics collection does not happen often enough if your query plans get better after a manual ANALYZE of the table.

In that case, you can lower autovacuum_analyze_scale_factor so that autoanalyze processes the table more often:

|

1 |

ALTER TABLE mytable SET (autovacuum_analyze_scale_factor = 0.02); |

An alternative is not to use the scale factor, but set autovacuum_analyze_threshold, so that table statistics are calculated whenever a fixed number of rows changes. For example, to configure a table to be analyzed whenever more than a million rows change:

|

1 2 3 4 |

ALTER TABLE mytable SET ( autovacuum_analyze_scale_factor = 0, autovacuum_analyze_threshold = 1000000 ); |

Depending on your specific problem and your PostgreSQL version, there are different tuning knobs to make autovacuum do its job correctly. The many tasks of autovacuum and the many configuration parameters don't make that any easier.

If the tips in this article are not enough for you, consider getting professional consulting.

+43 (0) 2622 93022-0

office@cybertec.at

You are currently viewing a placeholder content from Facebook. To access the actual content, click the button below. Please note that doing so will share data with third-party providers.

More InformationYou are currently viewing a placeholder content from X. To access the actual content, click the button below. Please note that doing so will share data with third-party providers.

More Information

Hi Laurenz. Quick question: Does an insert that gets rolled back result in a dead tuple?

Yes, certainly.