Table of Contents

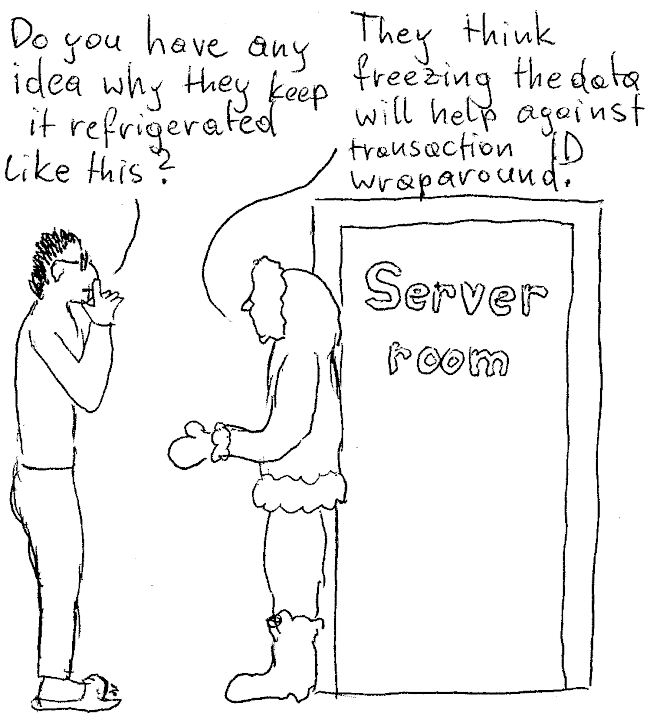

Most people are aware of transaction ID wraparound. The concept has been well explained in Hans' article, so I won't repeat all that here. But for most people it is an abstract concept, a bogeyman lurking in the dark around the corner. Many people know horror stories about anti-wraparound autovacuum tanking performance or databases that stop working, but who has ever seen actual data loss? I decided to face the beast (having developed a fondness for breaking things), and I learned something along the way. If you are curious, come along on this trip! We'll do some scary things that you shouldn't do to your databases at home.

No, it isn't. There are strong protections in PostgreSQL to prevent that. As you will see, I have to use evil tricks to overcome those protections. Still, these protections can disrupt operation, so it is good to be aware of the problem. But don't panic: usually, you won't even notice when your transaction IDs wrap around.

Since I will cause data corruption, I'll create a new cluster that I can discard afterwards:

|

1 |

initdb testdb |

Let's start the cluster. I will use a free, non-standard port and allow a prepared transaction. That prepared transaction will later be used to stir trouble.

|

1 2 3 4 5 |

pg_ctl start -o '-c port=5555' -o '-c unix_socket_directories=/tmp' -o '-c max_prepared_transactions=1' -D testdb -l logfile |

Now let's connect with

|

1 |

psql -h /tmp -p 5555 -d postgres |

Now we can create some data (that we will destroy later). I'll return xmin for each row I create, which stores the transaction ID of the creating transaction. Similarly, I'll return xmax for all deleting statements. For detailed information about xmax, you can read my article on that topic.

It is very important that we don't SELECT from the table at this stage, since that would set hint bits, which would spoil the effect.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

CREATE TABLE data (id integer PRIMARY KEY, x text); INSERT INTO data VALUES (1, 'hello') RETURNING xmin; xmin ══════ 725 (1 row) DELETE FROM data WHERE id = 1 RETURNING xmax; xmax ══════ 726 (1 row) INSERT INTO data VALUES (2, 'new') RETURNING xmin; xmin ══════ 727 (1 row) |

There are a few things that are known to keep autovacuum from cleaning up. In addition, data corruption can keep autovacuum from procesing a table. I decided to use a prepared transaction that holds a lock that keeps autovacuum from running:

|

1 2 3 |

BEGIN; LOCK data IN SHARE UPDATE EXCLUSIVE MODE; PREPARE TRANSACTION 'abc'; |

Prepared transactions are normally used for two-phase commit. They stay around until they are committed or rolled back, and even survive server restarts. A prepared transaction is a dangerous thing!

At this point, I would have to run about two billion transactions, but I am too lazy for that. Fortunately, PostgreSQL comes with a ready-made tool to facilitate data corruption: pg_resetwal.

There are some legitimate use cases of pg_resetwal for the normal user, for example, to change the WAL segment size. But in general, it is a tool for the expert, and is used for salvaging data from a broken PostgreSQL cluster. The idea would be to somehow get a broken instance so that it can be started, then dump what data you can. We will use it to wantonly advance the transaction ID. For that, it is important to perform a clean shutdown of the cluster, otherwise pg_resetwal has to be called with the option -f, which will likely destroy some data.

We will set the transaction ID to 231 - 10000000:

|

1 2 3 |

pg_ctl stop -D testdb pg_resetwal -x 2137483648 -D testdb |

We also have to “fake” a commit log file for this transaction:

|

1 |

dd if=/dev/zero of=testdb/pg_xact/07F6 bs=8192 count=15 |

Let's start the server as indicated above and connect. Then we consume a transaction ID. The cheapest way to do this is by calling the function pg_current_xact_id():

|

1 2 3 4 5 6 7 8 9 |

SELECT pg_current_xact_id(); WARNING: database 'template1' must be vacuumed within 10000715 transactions HINT: To avoid a database shutdown, execute a database-wide VACUUM in that database. You might also need to commit or roll back old prepared transactions, or drop stale replication slots. pg_current_xact_id ════════════════════ 2137483648 (1 row) |

This warning is shown when we are less than 40 million transactions from the point of data corruption. It is still easy to ignore, if you don't look into the log files.

Again, we stop the server and move on to transaction 231 - 1000000, and again we create a matching commit log file:

|

1 2 3 4 5 |

pg_ctl stop -D testdb pg_resetwal -x 2146483648 -D testdb dd if=/dev/zero of=testdb/pg_xact/07FF bs=8192 count=2 |

We start the server and try to get a transaction ID:

|

1 2 3 4 5 |

SELECT pg_current_xact_id(); ERROR: database is not accepting commands to avoid wraparound data loss in database 'template1' HINT: Stop the postmaster and vacuum that database in single-user mode. You might also need to commit or roll back old prepared transactions, or drop stale replication slots. |

Now it is game over for normal database activity. At this point, all you can do is to manually VACUUM the tables that still contain old, unfrozen tuples. Note that the hint is not quite accurate: since VACUUM doesn't consume a transaction ID, you don't need to start PostgreSQL in single-user mode for that. It is actually somewhat dangerous to use single-user mode, since that disarms the safety, and you can continue consuming transaction IDs. However, since the above error occurs three million transactions before data corruption, there is plenty of headroom for failed attempts.

Undeterred by all these warnings, we wrap around all the way to transaction 725. Note that we don't have to fake a commit log, because the old files are still there (autovacuum never could clean up anything).

|

1 |

pg_resetwal -x 725 -D testdb |

Now we start PostgreSQL in single-user mode and reuse transactions 725 to 727. Then we commit the prepared transaction to remove the obstacle:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 |

postgres --single -D testdb -c synchronous_commit=off -c max_prepared_transactions=1 postgres 2022-12-21 15:13:21.995 CET [46803] LOG: recovering prepared transaction 728 from shared memory PostgreSQL stand-alone backend 15.1 backend> SELECT pg_current_xact_id() 1: pg_current_xact_id (typeid = 5069, len = 8, typmod = -1, byval = t) ---- 1: pg_current_xact_id = '725' (typeid = 5069, len = 8, typmod = -1, byval = t) ---- backend> BEGIN backend> SELECT pg_current_xact_id() 1: pg_current_xact_id (typeid = 5069, len = 8, typmod = -1, byval = t) ---- 1: pg_current_xact_id = '726' (typeid = 5069, len = 8, typmod = -1, byval = t) ---- backend> ROLLBACK backend> BEGIN backend> SELECT pg_current_xact_id() 1: pg_current_xact_id (typeid = 5069, len = 8, typmod = -1, byval = t) ---- 1: pg_current_xact_id = '727' (typeid = 5069, len = 8, typmod = -1, byval = t) ---- backend> ROLLBACK backend> COMMIT PREPARED 'abc' |

We terminate the session with Ctrl+D, which will shut down PostgreSQL.

We start the server in the usual way. We don't need a prepared transaction any more:

|

1 2 3 4 5 6 |

pg_ctl start -o '-c port=5555' -o '-c unix_socket_directories=/tmp' -D testdb -l logfile psql -h /tmp -p 5555 -d postgres |

Let's look at the table:

|

1 2 3 4 5 6 |

TABLE data; id │ x ════╪═══════ 1 │ hello (1 row) |

Indeed, row 1, which we deleted in the beginning, is alive again. Row 2, which should be visible, is gone. What happened? By rolling back transactions 726 and 727, we undid the DELETE and INSERT. Note that row 2 is invisible, but still present:

|

1 2 3 |

INSERT INTO data VALUES (2, 'try'); ERROR: duplicate key value violates unique constraint 'data_pkey' DETAIL: Key (id)=(2) already exists. |

What a mess!

We can use the pageinspect extension to examine what is on disk:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

CREATE EXTENSION pageinspect; SELECT lp, t_ctid AS ctid, t_xmin AS xmin, t_xmax AS xmax, to_hex(t_infomask) AS infomask, to_hex(t_infomask2) AS infomask2, t_attrs AS attrs FROM heap_page_item_attrs(get_raw_page('data', 0), 'data'); lp │ ctid │ xmin │ xmax │ infomask │ infomask2 │ attrs ════╪═══════╪══════╪══════╪══════════╪═══════════╪═══════════════════════════════════ 1 │ (0,1) │ 725 │ 726 │ 902 │ 2002 │ {'\x01000000','\x0d68656c6c6f'} 2 │ (0,2) │ 727 │ 0 │ a02 │ 2 │ {'\x02000000','\x096e6577'} (2 rows) |

PostgreSQL v14 introduced a new contrib module pg_surgery. This module can be used to deal with damage like we have here. It has a function to kill (remove) table rows and a function to freeze rows (make them unconditionally visible). Let's try that:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

CREATE EXTENSION pg_surgery; SELECT heap_force_freeze('data', ARRAY['(0,2)'::tid]); heap_force_freeze ═══════════════════ (1 row) SELECT heap_force_kill('data', ARRAY['(0,1)'::tid]); heap_force_kill ═════════════════ (1 row) TABLE data; id │ x ════╪═════ 2 │ new (1 row) |

The functions in this module are dangerous. We only use them here because we already suffered damage. The idea is not to repair the damage and go on, but to get the database into a state where the data can be dumped and restored to a new, healthy cluster. You should never continue working with a PostgreSQL instance that has suffered data corruption, even if the corruption seems to be fixed. There might be invisible surprises lurking somewhere.

We have seen that it is impossible to suffer data corruption from transaction ID wraparound by normal means. We saw the dead rise. We got to use dangerous tools like pg_resetwal and pg_surgery. I hope you enjoyed the ride!

+43 (0) 2622 93022-0

office@cybertec.at

You are currently viewing a placeholder content from Facebook. To access the actual content, click the button below. Please note that doing so will share data with third-party providers.

More InformationYou are currently viewing a placeholder content from X. To access the actual content, click the button below. Please note that doing so will share data with third-party providers.

More Information